We work with the latest software tools and techniques, and we continuously develop our skills to improve how we explain technical products digitally for our customers. To do this, we collaborate across disciplines with developers, universities, and research institutions.

Computer Visualization

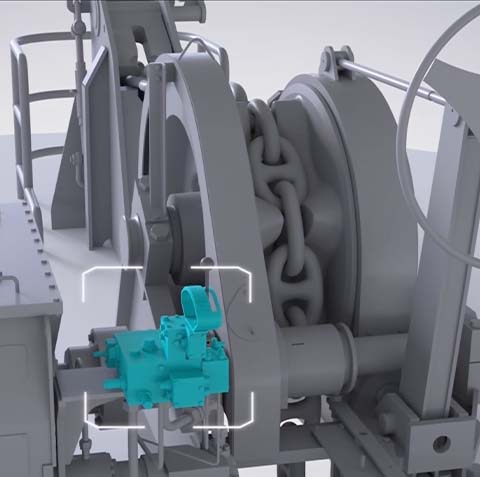

"A picture is worth a thousand words." Visual representations, or computer visualizations, are the most universal means of explanation. Our work is based on a "digital twin" of your product, which we build in our system from your engineering design data. Because we work in 3D, we can output any desired file format, image size, and resolution without loss of quality. Still images can, of course, also be rendered from any sequence of a computer animation.

Computer Animation

Computer animation goes well beyond the limits of live-action film, which makes it an ideal way to enhance the presentation of your product. Based on the digital twin, we can animate every movement or change of your product photorealistically. The digital model can be cut open, have parts hidden, and be placed in any environment. We define computer animation as a digital film that can be output in any desired format and size. A soundtrack with music and a voice-over makes the presentation compelling.

Real-Time 3D

Operating a 3D model interactively with a mouse or another input device is a convenient way to put your product virtually into your customer's hands and let them try it out. How does the latch open? What happens when I press this button? How is a component removed from the machine? Let your customers experience your product and explore how to handle it through play. Based on the digital twin, we create functional models that can be operated like a simulator. We deliver these in the web browser or on mobile devices.

Virtual Reality

The next step into the virtual world: move around inside your product. Based on the digital twin, we can enable your customers to move through a 3D model. Take a seat inside your design, walk through your building site, or train virtually in a simulated training environment. We produce solutions for PCs and mobile devices as well as for VR headsets, and they can run either online or offline.

Augmented Reality

Bring your digital twin into the real world: place your design on the desk in front of you, or walk around your development and view it from every side. Using capable hardware such as AR headsets, but also modern smartphones and tablets, we can combine the real and the virtual world. Microsoft increasingly uses the term "Mixed Reality" for this, expressing how the virtual world, the real world, and the various devices are converging. Digital information can thus be brought directly to the machine in the real world. Read values right at the machine, let the machine explain how it is repaired, or check your design against reality.